Arguments for the slurm external scheduler – HP XC System 4.x Software User Manual


options that specify the minimum number of nodes required for the job, specific nodes for the job, and so on.

Note:

The SLURM external scheduler is a plug-in developed by Platform Computing for LSF; it is not actually part of SLURM. This plug-in communicates with SLURM to gather resource information and request allocations of nodes, but it is integrated with the LSF scheduler.

The format of this option is shown here:

-ext "SLURM[slurm-arguments]"

The bsub command format to submit a parallel job to an LSF allocation of compute nodes using the external scheduler option is as follows:

bsub -n num-procs -ext "SLURM[slurm-arguments]" [bsub-options] [ -srun [srun-options] ] [jobname] [job-options]
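As a rough illustration of the argument order in this synopsis, the following Python sketch assembles a bsub argument vector. The function name build_bsub_argv is hypothetical, the sketch does not submit anything, and passing srun hostname as the job together with the -I bsub option is an assumption made for illustration; substitute the options your site actually uses.

```python
import shlex
from typing import Sequence


def build_bsub_argv(
    num_procs: int,
    slurm_arguments: str,
    bsub_options: Sequence[str] = (),
    job: Sequence[str] = (),
) -> list[str]:
    """Assemble a bsub argument vector in the order shown in the synopsis.

    slurm_arguments is the text placed inside SLURM[...], for example
    "nodes=2;mincpus=2". job holds the job name and its options, optionally
    preceded by an srun invocation (hypothetical helper, illustration only).
    """
    if num_procs < 1:
        raise ValueError("num_procs must be a positive integer")
    # bsub -n num-procs -ext "SLURM[slurm-arguments]"
    argv = ["bsub", "-n", str(num_procs), "-ext", f"SLURM[{slurm_arguments}]"]
    argv.extend(bsub_options)  # [bsub-options]
    argv.extend(job)           # launcher, [jobname] and [job-options]
    return argv


argv = build_bsub_argv(4, "nodes=2", bsub_options=["-I"], job=["srun", "hostname"])
print(shlex.join(argv))  # prints: bsub -n 4 -ext 'SLURM[nodes=2]' -I srun hostname
```

Keeping the command as a list lets it be passed to subprocess.run without shell quoting; shlex.join is used only to display it, and its single quotes around the -ext value are equivalent to the double quotes in the synopsis for this string.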

The slurm-arguments parameter can be one or more of the following srun options, separated by semicolons, as described in Table 5-1.

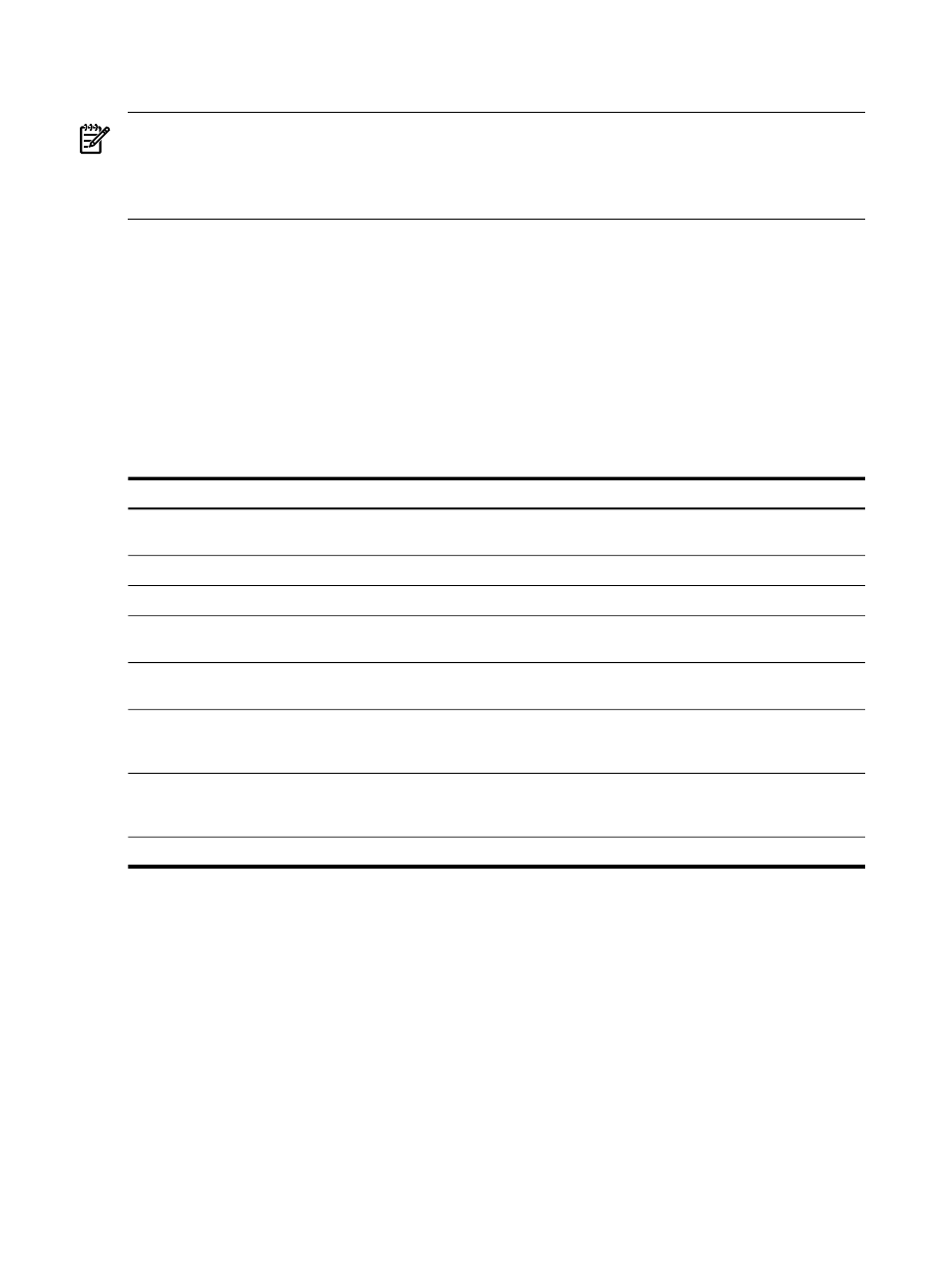

Table 5-1 Arguments for the SLURM External Scheduler

| SLURM Arguments | Function |
| --- | --- |
| nodes=min[-max] | Specifies the minimum and maximum number of nodes allocated to the job. The job allocation will contain at least the minimum number of nodes. |
| mincpus=ncpus | Specifies the minimum number of cores per node. The default value is 1. |
| mem=value | Specifies a minimum amount of real memory, in megabytes, on each node. |
| tmp=value | Specifies a minimum amount of temporary disk space, in megabytes, on each node. |
| constraint=feature | Specifies a list of constraints. The list may include multiple features separated by `&` (AND) or `\|` (OR). |
| nodelist=list of nodes | Requests a specific list of nodes. The job allocation will contain at least these nodes. The list may be specified as a comma-separated list of nodes or as a range of nodes. |
| exclude=list of nodes | Requests that a specific list of hosts not be included in the resources allocated to this job. The list may be specified as a comma-separated list of nodes or as a range of nodes. |
| contiguous=yes | Requests a mandatory contiguous range of nodes. |
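Because the arguments in Table 5-1 are plain name=value pairs joined by semicolons, the -ext value can be generated mechanically. The Python sketch below is a hypothetical helper (slurm_ext and its checks are not part of LSF or SLURM): it rejects names that do not appear in the table, accepts only contiguous=yes, and then joins the pairs. It does not validate values such as feature names or node lists.

```python
# The eight argument names listed in Table 5-1.
ALLOWED_KEYS = ("nodes", "mincpus", "mem", "tmp", "constraint",
                "nodelist", "exclude", "contiguous")


def slurm_ext(**arguments: object) -> str:
    """Return a value for bsub -ext, such as SLURM[nodes=2-4;mincpus=2].

    Keyword arguments map one-to-one onto the name=value pairs of Table 5-1
    and are joined with semicolons in the order given (hypothetical helper).
    """
    unknown = [key for key in arguments if key not in ALLOWED_KEYS]
    if unknown:
        raise ValueError(f"not a SLURM external scheduler argument: {unknown}")
    # Table 5-1 documents only the value "yes" for contiguous.
    if "contiguous" in arguments and arguments["contiguous"] != "yes":
        raise ValueError("contiguous must be 'yes'")
    pairs = [f"{key}={value}" for key, value in arguments.items()]
    return "SLURM[" + ";".join(pairs) + "]"


# Between 2 and 4 nodes, at least 2 cores per node, never nodes n7 and n8.
print(slurm_ext(nodes="2-4", mincpus=2, exclude="n[7-8]"))
# prints: SLURM[nodes=2-4;mincpus=2;exclude=n[7-8]]
```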

The Platform LSF documentation provides more information on general external scheduler support.

Consider an HP XC system configuration in which lsfhost.localdomain is the LSF execution host and nodes n[1-10] are compute nodes in the lsf partition. All nodes contain two cores, providing 20 cores for use by LSF jobs.
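The n[1-10] notation is a node range, and the 20-core figure is simply 10 nodes × 2 cores. The Python sketch below makes both steps explicit using a hypothetical expand_node_range helper; it handles only a single prefix followed by one bracketed list of numbers and ranges, not the full SLURM hostlist syntax (zero-padded or multi-part forms, for example).

```python
import re


def expand_node_range(spec: str) -> list[str]:
    """Expand notation such as "n[1-10]" or "n[1-3,7]" into node names.

    A name without brackets is returned as a single node. This is a
    simplified sketch, not a complete hostlist parser.
    """
    match = re.fullmatch(r"([^\[\]]+)\[([0-9,\-]+)\]", spec)
    if match is None:
        return [spec]
    prefix, body = match.groups()
    nodes = []
    for item in body.split(","):
        # "1-3" expands to 1, 2, 3; a bare "7" is a single node.
        first, _, last = item.partition("-")
        stop = int(last) if last else int(first)
        nodes.extend(f"{prefix}{i}" for i in range(int(first), stop + 1))
    return nodes


CORES_PER_NODE = 2  # every compute node in this example configuration has two cores
compute_nodes = expand_node_range("n[1-10]")
print(len(compute_nodes), "nodes,", len(compute_nodes) * CORES_PER_NODE, "cores")
# prints: 10 nodes, 20 cores
```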

The following example shows one way to submit a parallel job to run on a specific node or nodes.

